Anyone who’s produced a commercial fashion shoot knows the logistics are a nightmare. You’re juggling models, location permits, lighting rentals, weather, and expenses that eat your budget before you’ve even snapped the first frame.

AI changes that equation. Until recently, it wasn’t a viable alternative because it couldn’t handle the most important thing: product consistency. You could get a cool image, but the shoes would look wrong, or the logo would be hallucinated.

That changes with Gemini 3 (Nano Banana Pro). This model has a level of adherence to reference images that we haven’t seen before. It allows us to take real product shots – flat lays or mannequins – and place them on a model in a specific environment with high fidelity.

I’m going to walk you through a workflow in Weavy (aka Figma Weave) that turns a folder of product JPEGs into a high-end editorial spread.

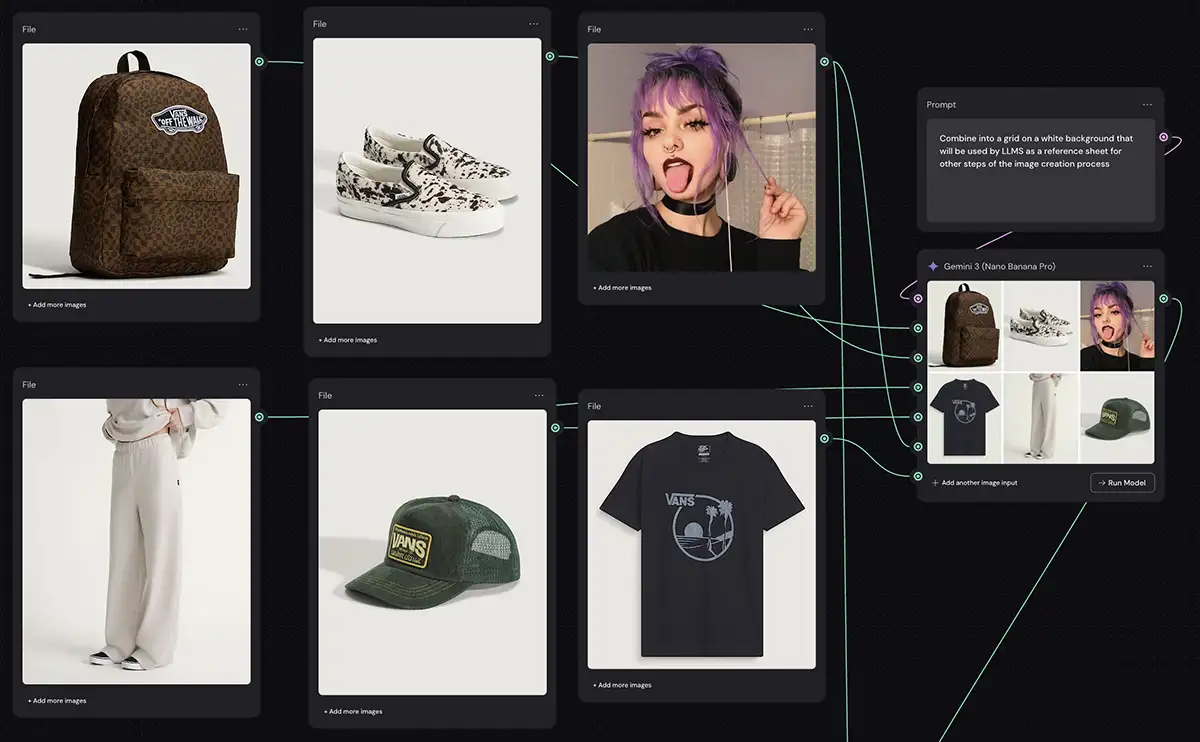

Step 1: The Product/Model Reference Sheet

A big mistake creators make is trying to feed the AI 5-10 different product images separately and hoping it figures out how to wear them all at once. This CAN work – Nano Banana supports up to 14 input images – but every extra input is another potential failure point.

The better move is usually to create an intermediate reference sheet (example above).

We take our individual assets – the backpack, the slip-ons, the sweatpants, the trucker hat – along with the model image, and use a simple Gemini 3/Nano Banana node to combine them into a single “collage” image with this prompt:

“Combine into a single image that will be used by LLMS as a reference sheet for other steps of the image creation process”

It doesn’t need to look fancy, since it’s only used as an intermediate step by the next Nano Banana node.

Why this works: We treat this generated collage as our “Source of Truth.” We feed this single image into the final generation node. Nano Banana Pro is exceptional at analyzing a reference sheet and understanding that these items belong together. And it maintains details like the texture of the flannel, the pattern of the shoes, and especially text/logos much better than previous models.
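If it helps to see the two-stage idea outside of a node graph, here’s a rough Python sketch. It doesn’t call Weavy or Gemini at all; `NodeJob` and `plan_two_stage` are names I made up for illustration, and the only facts taken from above are the collage prompt and the 14-image input limit.

```python
from dataclasses import dataclass, field

# Input-image limit quoted above for Nano Banana.
MAX_INPUT_IMAGES = 14

# Collage prompt quoted above, copied as-is.
SHEET_PROMPT = (
    "Combine into a single image that will be used by LLMS as a reference "
    "sheet for other steps of the image creation process"
)


@dataclass
class NodeJob:
    """Hypothetical description of one image-generation node run."""
    prompt: str
    input_images: list[str] = field(default_factory=list)


def plan_two_stage(asset_paths: list[str], final_prompt: str,
                   sheet_name: str = "reference_sheet.png") -> list[NodeJob]:
    """Return two jobs: build the reference sheet, then generate from it alone."""
    if not asset_paths:
        raise ValueError("need at least one product or model image")
    if len(asset_paths) > MAX_INPUT_IMAGES:
        raise ValueError(
            f"too many inputs: {len(asset_paths)} > {MAX_INPUT_IMAGES}"
        )
    # Stage 1: every asset goes into the collage node.
    sheet_job = NodeJob(prompt=SHEET_PROMPT, input_images=list(asset_paths))
    # Stage 2: the final node only ever sees the single reference sheet.
    final_job = NodeJob(prompt=final_prompt, input_images=[sheet_name])
    return [sheet_job, final_job]
```

The point of the structure is that the final job has exactly one image input, no matter how many product shots you started with.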

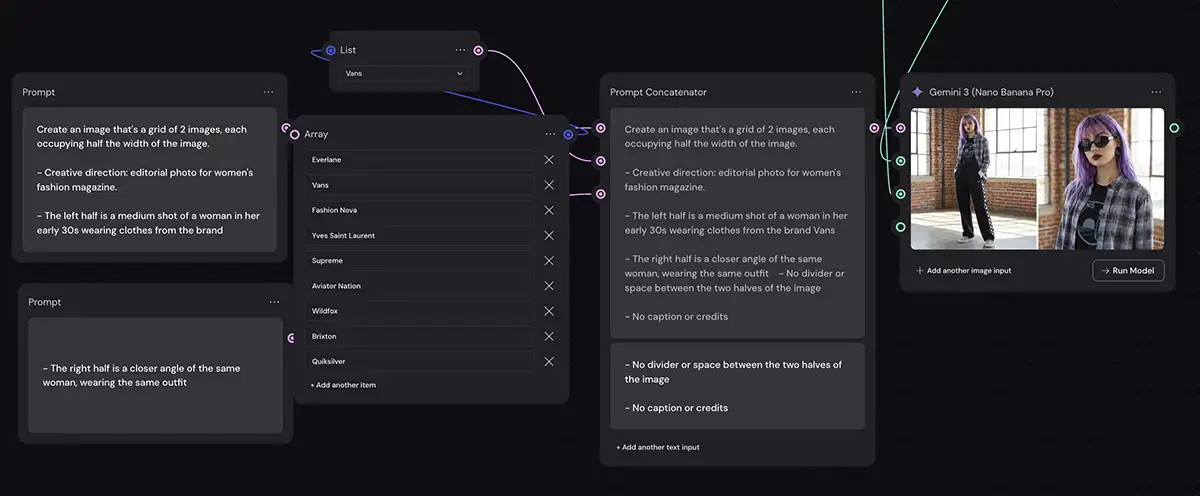

Step 2: The Prompt and Brand Context

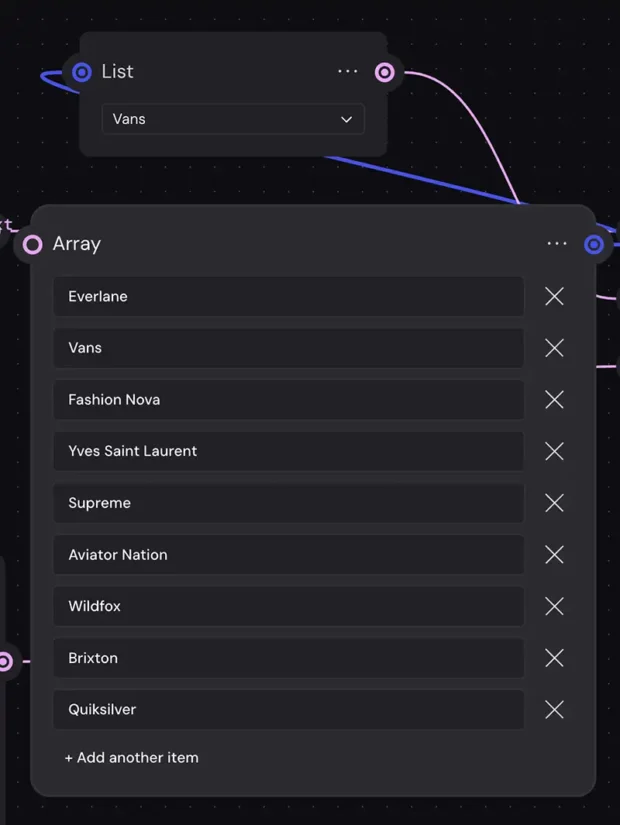

Once we have our product reference, we need to tell the AI how to shoot it. You can just put everything in a single prompt box, but a few minutes of setup with Weavy’s list, array, and “prompt concatenator” nodes makes the prompt much more flexible and reusable.

And BTW, you should be able to do something similar in other node-based apps like Leonardo, Freepik, Flora, etc. The details will vary, but the same approach should work.

A. Structural Constraints

First, I give the AI strict formatting rules. In this example, I don’t want a standard 1:1 square. I want a wide, cinematic aspect ratio that mimics a magazine spread.

My prompt explicitly asks for:

“Create an image that’s a grid of 2 images… The left half is a medium shot… The right half is a closer angle of the same woman, wearing the same outfit.”

This forces the model to prove it understands the subject by rendering her consistently from two distances in the same frame.

B. The “Brand Hack”

This is one of the most powerful parts of using a Google-backed model like Gemini. Because Gemini has been trained on the world’s information, it understands the visual language of brands.

Instead of spending hours describing things like “softbox key light, rim light, urban decay background, boho vibe,” you can simply give Nano Banana the brand name.

In my Weavy setup, I have a list node (the yellow box) with brands like Supreme, Vans, Everlane, and Yves Saint Laurent.

- If I select Vans, the AI knows to use flat lighting, urban environments, and a younger, edgier mood.
- If I select Yves Saint Laurent, it automatically shifts to dramatic shadows, high contrast, and minimalist sets.

This lets the AI do the heavy lifting on “vibe,” letting you focus on the composition.
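For anyone who thinks better in code than in nodes, the list-plus-concatenator setup boils down to something like the sketch below. This is plain Python, not Weavy’s actual implementation: `concat_prompt` and `build_prompt` are hypothetical helpers, and the wording of the brand sentence is my own assumption. The structural prompt is the excerpt quoted above, elisions included.

```python
# Structural fragment, quoted from the excerpt above (elisions left as-is).
STRUCTURE = (
    "Create an image that’s a grid of 2 images… The left half is a medium "
    "shot… The right half is a closer angle of the same woman, wearing the "
    "same outfit."
)

# Contents of the brand list node.
BRANDS = ["Supreme", "Vans", "Everlane", "Yves Saint Laurent"]


def concat_prompt(*parts: str, sep: str = " ") -> str:
    """Join non-empty fragments in order, trimming stray whitespace."""
    return sep.join(p.strip() for p in parts if p and p.strip())


def build_prompt(brand: str) -> str:
    """Combine the fixed structural rules with one selected brand."""
    if brand not in BRANDS:
        raise ValueError(f"unknown brand: {brand!r}")
    # Assumed wording; the actual brand sentence isn't shown in this post.
    brand_line = f"Style the shoot as an editorial campaign for {brand}."
    return concat_prompt(STRUCTURE, brand_line)


if __name__ == "__main__":
    print(build_prompt("Vans"))
```

Keeping the structural rules and the brand in separate fragments is what makes the setup reusable: changing the dropdown only touches one piece.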

Step 3: High-Fidelity Execution

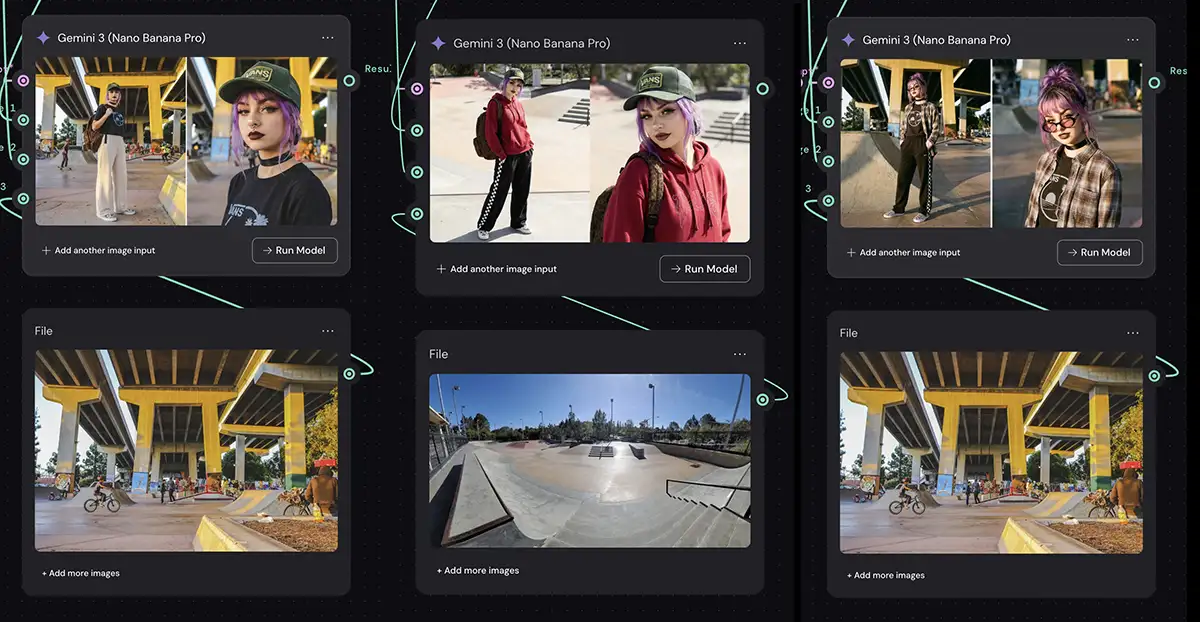

Finally, we feed the Reference Sheet (Step 1) and our Brand/Structural Prompt (Step 2) into the final Gemini 3 Nano Banana Pro node. Just click “run model” and wait a few seconds! If you set everything up correctly above, you should see a finished editorial shot in Weavy.

There is one critical setting here: Resolution.

Because this is a commercial output, standard web resolution doesn’t cut it. Nano Banana Pro now supports native 4K generation within Weavy.
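Resolution matters even more with the two-panel layout from Step 2, because each panel only gets half the frame’s width. Here’s a quick back-of-the-envelope sketch of that arithmetic. The 16:9 ratio and the 3840-pixel long edge are assumptions for illustration, so check the dimensions your node actually outputs.

```python
def frame_size(long_edge: int, aspect_w: int, aspect_h: int) -> tuple[int, int]:
    """Pixel width and height of a landscape frame with the given long edge."""
    if aspect_w < aspect_h:
        raise ValueError("expected a landscape ratio (aspect_w >= aspect_h)")
    height = round(long_edge * aspect_h / aspect_w)
    return long_edge, height


def panel_size(width: int, height: int, columns: int = 2) -> tuple[int, int]:
    """Pixel size of one panel when the frame is split into equal columns."""
    return width // columns, height


if __name__ == "__main__":
    # Assumed long edges: 3840 for "4K", 1920 for a typical web-sized frame.
    for label, long_edge in [("4K", 3840), ("web", 1920)]:
        w, h = frame_size(long_edge, 16, 9)
        print(label, (w, h), "panel:", panel_size(w, h))
```

Under those assumptions, each half of a 4K frame is 1920 × 2160, while each half of a 1920-wide frame is only 960 × 1080.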

The Result: If you look at the final output in the screenshot, you see the model wearing the exact hat, pants, and shoes from our reference images. The set, lighting, and overall mood are on-brand for Vans.

You’ll probably still want to take this into Photoshop to fine-tune a few things, but you should be 90% of the way there (or even 100%, for some applications).

Adding in some other outfits, and a background reference image

The bottom line

Keep experimenting: connect an additional node to specify the background. Mix and match outfits with backgrounds. Specify the pose, angle, lens, film stock, etc. Try using another image as a style reference. You get the idea… you can get into as much detail as you want, or leave it up to the AI to fill in the blanks.

We are moving from “prompt and pray” to “engineer and execute.”

By setting up a workflow like this, you aren’t just generating random images. You’re building a virtual photography studio. You can swap out the product folder, change the brand from “Vans” to “Gucci” in the dropdown, and generate a completely new campaign in minutes. Repose one of the models if you want.
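To make the “swap the folder, swap the brand” idea concrete, here’s a small sketch that enumerates every combination you might queue up. The folder names and the `campaign_matrix` helper are hypothetical; in Weavy you’d do this by changing inputs on the nodes rather than running a script.

```python
from itertools import product


def campaign_matrix(product_folders: list[str],
                    brands: list[str]) -> list[dict[str, str]]:
    """One entry per (product folder, brand) pair, in a stable order."""
    return [
        {"folder": folder, "brand": brand}
        for folder, brand in product(product_folders, brands)
    ]


if __name__ == "__main__":
    # Hypothetical folder names, purely for illustration.
    runs = campaign_matrix(["fall_collection", "spring_collection"],
                           ["Vans", "Gucci"])
    for run in runs:
        print(run["folder"], "->", run["brand"])
```

Two folders and two brands give four runs, and the list grows multiplicatively as you add backgrounds or poses as further dimensions.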

And most of all, have fun!